Langfuse

Detail Information

What

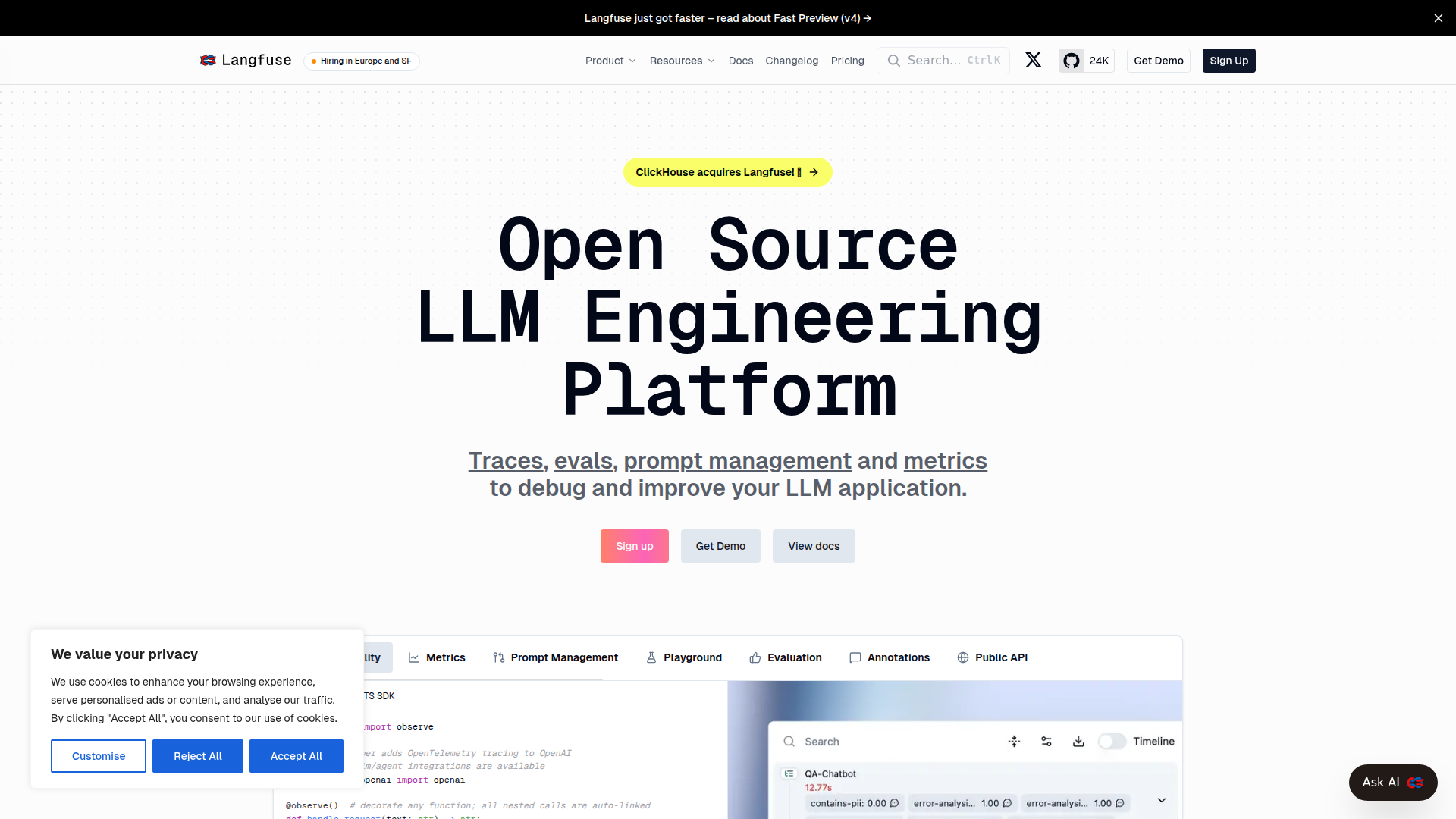

Langfuse is an open-source LLM engineering platform for teams building LLM applications and agents. Based on the page, it focuses on tracing, evaluation, prompt management, and metrics so teams can debug behavior, inspect failures, and improve application quality over time.

It appears to serve developers and AI product teams working with complex LLM workflows across different models and libraries. The core workflow has four steps: instrument the app with the SDKs or OpenTelemetry, capture traces and observations, review prompts and outputs, and use evaluations and metrics to refine prompts, agents, and datasets.

Features

- LLM observability and tracing: Captures complete traces of LLM applications and agents, helping teams inspect failures and understand execution paths.

- OpenTelemetry-based instrumentation: Supports OpenTelemetry and provides a drop-in wrapper pattern, which can simplify adding tracing to existing code.

- Prompt management: Includes prompt management capabilities so teams can organize and iterate on prompts as part of the development workflow.

- Evaluation tools: Supports evals, annotations, and dataset-building workflows, which are useful for structured quality review and regression testing.

- Metrics and dashboards: Provides metrics for monitoring LLM application behavior and performance, though the page does not fully specify every dashboard or reporting function.

- Broad developer ecosystem support: Offers Python and JS/TS SDKs, a public API, and integrations with model providers and frameworks such as OpenAI, LangChain, LangGraph, LlamaIndex, CrewAI, DSPy, Semantic Kernel, and others.
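To make the tracing and drop-in wrapper idea above concrete, here is a minimal sketch using only the Python standard library. It is not Langfuse's SDK or API: the `traced` decorator, the `Observation` record, and the in-memory `RECORDED` list are hypothetical names chosen for illustration, and a real setup would export observations to a tracing backend instead of a list.

```python
import functools
import time
import uuid
from contextvars import ContextVar
from dataclasses import dataclass
from typing import Any, Callable, List, Optional


@dataclass
class Observation:
    """One recorded step of a run (hypothetical shape, not a Langfuse type)."""
    id: str
    name: str
    parent_id: Optional[str]
    input: Any = None
    output: Any = None
    error: Optional[str] = None
    duration_ms: float = 0.0


# Hypothetical in-memory sink standing in for a tracing backend.
RECORDED: List[Observation] = []

# Tracks which observation is currently open so nested calls can link to it.
_current_parent: ContextVar[Optional[str]] = ContextVar("current_parent", default=None)


def traced(name: str) -> Callable:
    """Wrap a function so each call is recorded with input, output, and timing."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            obs = Observation(
                id=uuid.uuid4().hex,
                name=name,
                parent_id=_current_parent.get(),
                input={"args": args, "kwargs": kwargs},
            )
            token = _current_parent.set(obs.id)
            start = time.perf_counter()
            try:
                obs.output = fn(*args, **kwargs)
                return obs.output
            except Exception as exc:
                # Keep the failure on the record, then let it propagate.
                obs.error = repr(exc)
                raise
            finally:
                obs.duration_ms = (time.perf_counter() - start) * 1000
                _current_parent.reset(token)
                RECORDED.append(obs)
        return wrapper
    return decorator


@traced("fake_llm_call")
def fake_llm_call(prompt: str) -> str:
    # Stand-in for a model call; it just upper-cases the prompt.
    return prompt.upper()


@traced("answer_question")
def answer_question(question: str) -> str:
    return fake_llm_call(f"Q: {question}")


result = answer_question("what is tracing?")
for obs in RECORDED:
    print(obs.name, "parent:", obs.parent_id)
```

Because an observation is appended when its call finishes, the inner `fake_llm_call` record is stored before the outer `answer_question` record, and the inner record's `parent_id` points at the outer one. That parent link is what lets a trace viewer rebuild the execution path.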

Helpful Tips

- Prioritize instrumentation early: Tracing is most useful when it is added at the start of development, before agent logic and prompt chains become hard to diagnose.

- Validate integration depth by framework: The page lists many supported libraries, but teams should confirm whether they need native integration, OpenTelemetry support, or custom API-based instrumentation.

- Use evals with real failure cases: The strongest value usually comes from turning traced production issues into evaluation datasets for repeated testing.

- Plan self-hosting versus hosted use deliberately: Langfuse emphasizes both open source and self-hosting options, so deployment choice should reflect data governance, team ops capacity, and performance requirements.

- Check maturity of specific features: The changelog shows rapid product development, which is useful for innovation but means buyers should verify the current status of beta or newly released capabilities.
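The tip about turning traced failures into evaluation datasets can be sketched in plain Python. This is an illustrative sketch under stated assumptions, not Langfuse's dataset or evaluation API: `TraceRecord`, `DatasetItem`, `build_dataset`, `run_regression`, the 0.5 score threshold, and the substring check are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class TraceRecord:
    """A simplified production trace (hypothetical shape)."""
    trace_id: str
    user_input: str
    output: str
    score: float  # assumed quality score in [0, 1], e.g. from an annotation


@dataclass(frozen=True)
class DatasetItem:
    source_trace_id: str
    input: str
    expected_substring: str  # reviewer-supplied expectation for a good answer


def build_dataset(
    traces: List[TraceRecord],
    expectations: Dict[str, str],
    threshold: float = 0.5,
) -> List[DatasetItem]:
    """Keep low-scoring traces that a reviewer has written an expectation for."""
    return [
        DatasetItem(t.trace_id, t.user_input, expectations[t.trace_id])
        for t in traces
        if t.score < threshold and t.trace_id in expectations
    ]


def run_regression(
    items: List[DatasetItem], app: Callable[[str], str]
) -> Dict[str, bool]:
    """Re-run each past failure against a candidate app and report pass/fail."""
    return {
        item.source_trace_id: item.expected_substring.lower() in app(item.input).lower()
        for item in items
    }


traces = [
    TraceRecord("t1", "refund policy?", "I don't know.", 0.1),
    TraceRecord("t2", "opening hours?", "We open at 9am.", 0.9),
    TraceRecord("t3", "reset password?", "Please call us.", 0.3),
]
expectations = {"t1": "30 days", "t3": "reset link"}


def candidate_app(question: str) -> str:
    # Stand-in for the next version of the application under test.
    answers = {
        "refund policy?": "Refunds are accepted within 30 days.",
        "reset password?": "Please call us.",
    }
    return answers.get(question, "")


dataset = build_dataset(traces, expectations)
results = run_regression(dataset, candidate_app)
print(results)
```

In this toy run the candidate fixes the refund answer but still fails the password case, which is the kind of signal a regression suite built from real failures is meant to surface.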

OpenClaw Skills

Langfuse could fit well into the OpenClaw ecosystem as an observability and evaluation layer for AI agents and production LLM workflows. A likely use case is an OpenClaw skill that automatically routes agent runs, tool calls, prompts, outputs, and evaluation events into Langfuse for trace analysis, prompt iteration, and quality monitoring. The page supports this general direction through its SDKs, public API, and OpenTelemetry foundation, but it does not explicitly confirm a native OpenClaw integration.

This combination could enable OpenClaw agents for AI operations, prompt QA, regression testing, and incident review. For example, an OpenClaw workflow could detect low-quality outputs, group failures by prompt version or tool path, trigger dataset creation, and assign remediation tasks to engineering or product teams. For teams building internal copilots, customer support automation, or multi-agent enterprise workflows, that would likely make LLM systems easier to audit, improve, and operate at scale.
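The "group failures by prompt version or tool path" step could look roughly like the sketch below. Nothing here comes from OpenClaw or Langfuse: `AgentRun`, `group_failures`, and the 0.5 score threshold are hypothetical, and the run data is made up purely to show the grouping logic.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class AgentRun:
    """A simplified agent run (hypothetical shape)."""
    run_id: str
    prompt_version: str
    tool_path: Tuple[str, ...]  # ordered names of the tools the agent called
    score: float                # assumed quality score in [0, 1]


def group_failures(runs: List[AgentRun], threshold: float = 0.5) -> Counter:
    """Count low-scoring runs per (prompt version, tool path) pair."""
    counts: Counter = Counter()
    for run in runs:
        if run.score < threshold:
            counts[(run.prompt_version, run.tool_path)] += 1
    return counts


runs = [
    AgentRun("r1", "v1", ("search", "summarize"), 0.2),
    AgentRun("r2", "v1", ("search", "summarize"), 0.4),
    AgentRun("r3", "v2", ("search", "summarize"), 0.9),
    AgentRun("r4", "v2", ("lookup",), 0.1),
]

failure_counts = group_failures(runs)
worst_group, worst_count = failure_counts.most_common(1)[0]
print(worst_group, worst_count)
```

Here the `v1` prompt combined with the search-then-summarize path accounts for two of the three failures, so it would be the first group to hand to a remediation task.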
