Lemon

Details

What

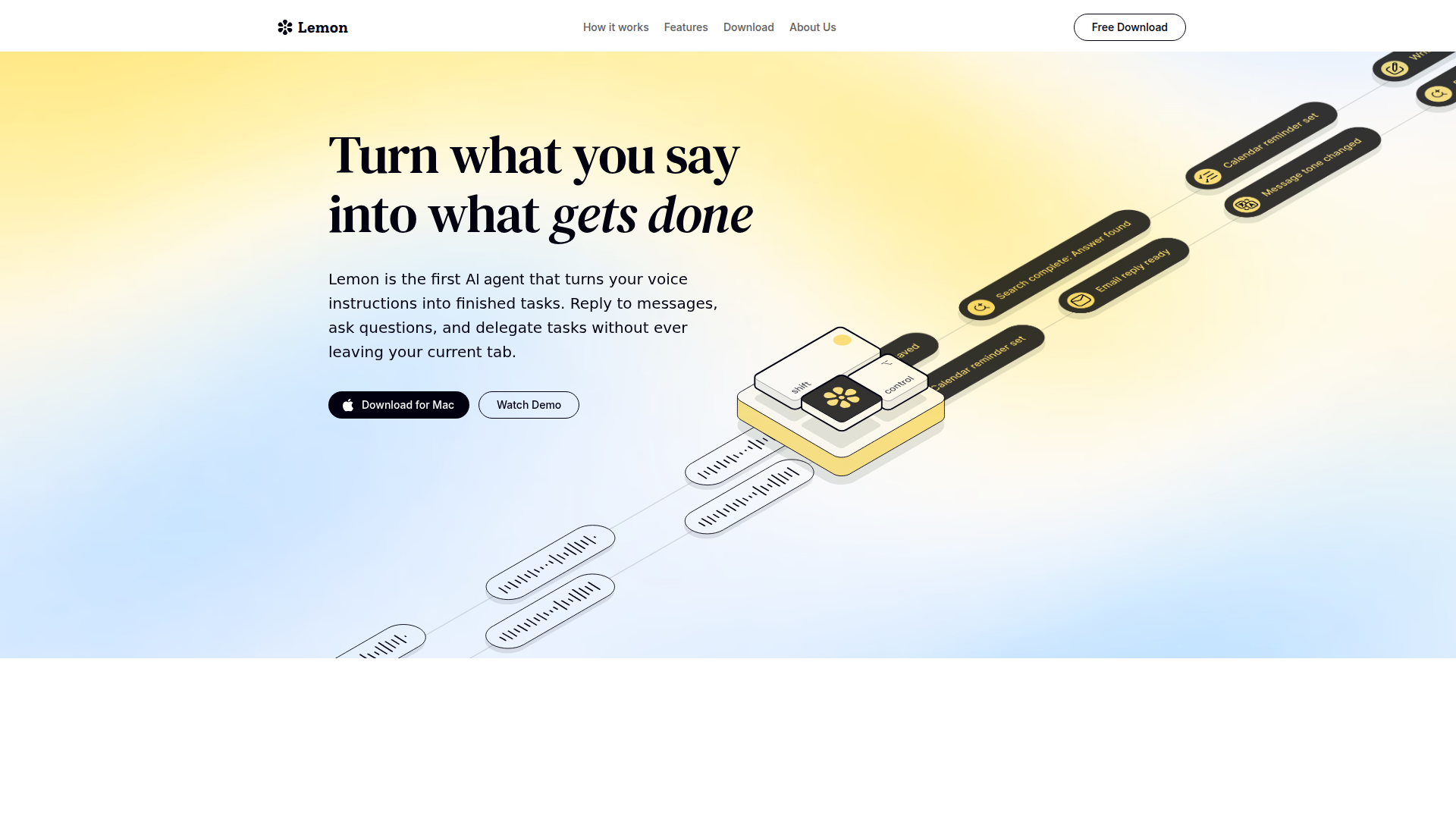

Lemon is a voice-driven AI agent for Mac, designed to turn spoken instructions into completed knowledge-work tasks. It targets people who spend their day switching between apps, documents, messaging, and research, and who want to run common workflows without breaking focus.

The core workflow is “press the fn key, speak, and get an output” while staying in the current tab. Based on the page, Lemon positions itself as an always-available voice layer that helps draft text, answer questions, and delegate or complete tasks across apps with minimal context switching.

Features

- Voice-triggered command execution (fn key): Start tasks by speaking, reducing friction between intent and action while staying in the current tab.

- Email and message replies: Generate “perfect replies” quickly to speed up routine communication.

- Research and document drafting: Turn a blank page into a polished draft faster, supporting writing and knowledge synthesis.

- Instant search: Retrieve information with low effort via voice, intended to reduce tab switching during research.

- Feedback, ideation, and “second brain” support: Help with brainstorming and refining thinking on demand.

- Tone and text modification, plus dictation: Edit wording and dictate text to write faster with less manual rewriting.

Helpful Tips

- Validate “works everywhere” in your critical apps: Test Lemon in the specific tools you use most (email, chat, docs) to confirm the voice trigger and output behavior are consistent.

- Define a small set of repeatable voice workflows: Standardize prompts for common tasks (reply, summarize, draft, rephrase) to improve speed and output quality.

- Set expectations for drafting vs. execution: The page claims finished tasks from voice instructions, but implementation details are limited; evaluate which tasks are truly completed end-to-end versus drafted for review.

- Establish review practices for outbound messaging: Use generated replies and tone changes with lightweight human checks to match brand, context, and intent.

- Measure impact using your own baselines: The page cites productivity statistics; confirm value by tracking your time spent typing, switching tabs, and drafting before/after adoption.

OpenClaw Skills

In the OpenClaw ecosystem, Lemon would likely serve as a voice front-end for agentic workflows: capturing spoken intent, transforming it into structured tasks, and routing them to specialized skills (e.g., “draft reply,” “summarize thread,” “create doc outline”). The most natural pattern is a “voice-to-work-queue” skill that converts dictation into actionable items with metadata (priority, recipient, document type), enabling consistent downstream automation.
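The "voice-to-work-queue" idea can be sketched in a few lines of Python. This is an illustration only: `WorkItem`, `parse_transcript`, the skill names, and the keyword rules are all hypothetical and are not taken from Lemon or OpenClaw. A real implementation would likely use a language model instead of keyword matching.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical skill names based on the examples in the text; not a real OpenClaw API.
SKILL_KEYWORDS = {
    "draft_reply": ("reply", "respond"),
    "summarize_thread": ("summarize", "summary"),
    "create_doc_outline": ("outline",),
}

# Hypothetical document types used only for this illustration.
DOCUMENT_TYPES = ("email", "memo", "report")


@dataclass
class WorkItem:
    """One actionable item derived from a spoken instruction."""
    skill: str
    transcript: str
    priority: str = "normal"
    recipient: Optional[str] = None
    document_type: Optional[str] = None


def parse_transcript(transcript: str) -> WorkItem:
    """Convert a dictated sentence into a work item using simple keyword rules."""
    text = transcript.lower()

    # Route to the first skill whose keywords appear in the transcript.
    skill = next(
        (name for name, words in SKILL_KEYWORDS.items()
         if any(word in text for word in words)),
        "unrouted",
    )

    priority = "high" if ("urgent" in text or "asap" in text) else "normal"

    # Treat a capitalized word after "to" as the recipient (a naive assumption).
    match = re.search(r"\bto ([A-Z][a-z]+)", transcript)
    recipient = match.group(1) if match else None

    document_type = next((d for d in DOCUMENT_TYPES if d in text), None)

    return WorkItem(skill, transcript, priority, recipient, document_type)
```

Calling `parse_transcript("Urgent, reply to Dana about the report")` would yield an item routed to `draft_reply` with high priority, recipient `Dana`, and document type `report`. Keeping the metadata in one structure is what makes consistent downstream handling possible.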

If native integration is not provided (the page does not say), OpenClaw could likely still wrap Lemon through OS-level triggers and clipboard-based handoffs: speak a command, generate a draft, then pass it to OpenClaw agents for quality checks (tone, compliance language, formatting) and context enrichment (pulling in relevant notes, prior decisions, or research). This combination could move knowledge workers toward hands-free task initiation while keeping agent orchestration and validation inside a structured workflow.
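The quality-check step in such a handoff could look like the following sketch. It assumes the draft has already arrived as a plain string (how it gets there, whether by clipboard or otherwise, is left out), and every function name and rule list here is hypothetical rather than part of Lemon or OpenClaw.

```python
from typing import Callable, List

# A check takes the draft text and returns a list of human-readable warnings.
Check = Callable[[str], List[str]]

# Hypothetical rule lists; real rules would come from your own style guide.
INFORMAL_WORDS = ("gonna", "wanna", "asap")
RISKY_PHRASES = ("guarantee", "no risk")


def check_tone(draft: str) -> List[str]:
    """Flag informal wording."""
    lowered = draft.lower()
    return [f"informal wording: {w!r}" for w in INFORMAL_WORDS if w in lowered]


def check_compliance(draft: str) -> List[str]:
    """Flag phrases that may need compliance review."""
    lowered = draft.lower()
    return [f"review claim: {p!r}" for p in RISKY_PHRASES if p in lowered]


def check_formatting(draft: str) -> List[str]:
    """Flag empty drafts and drafts that stop without sentence punctuation."""
    if not draft.strip():
        return ["draft is empty"]
    if not draft.rstrip().endswith((".", "!", "?")):
        return ["draft does not end with sentence punctuation"]
    return []


DEFAULT_CHECKS: List[Check] = [check_tone, check_compliance, check_formatting]


def review_draft(draft: str, checks: List[Check] = DEFAULT_CHECKS) -> List[str]:
    """Run every check in order and collect all warnings."""
    warnings: List[str] = []
    for check in checks:
        warnings.extend(check(draft))
    return warnings
```

An empty result from `review_draft` means no rule fired, not that the draft is safe to send. A human review step still applies to outbound messages.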
