Cedana

Detailed Information

What

Cedana is a compute orchestration platform for GPU and CPU workloads. It is designed for teams running AI inference, AI training, agents, gaming infrastructure, and HPC workloads that need better throughput, lower interruption risk, and more flexible use of on-premises and multi-cloud infrastructure.

The product extends existing orchestration environments such as Kubernetes and SLURM rather than replacing them. According to the vendor's site, its core workflow is to schedule, checkpoint, migrate, resume, and fail over stateful workloads in real time based on price, performance, SLAs, and resource availability, with a strong focus on reliability and utilization.
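The placement idea can be illustrated with a minimal Python sketch. Everything in it is hypothetical: the `Node` and `Job` fields, the `pick_node` function, and the rule of choosing the lowest cost per unit of throughput are assumptions for illustration, not Cedana's API or its actual scheduling algorithm. It drops nodes that lack free GPUs or would miss the job's latency SLA, then picks the cheapest remaining option per unit of work.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    # Hypothetical node description; field names are illustrative only.
    name: str
    free_gpus: int
    price_per_hour: float       # USD per node-hour
    throughput: float           # relative work units completed per hour
    expected_latency_ms: float  # expected serving latency on this node


@dataclass
class Job:
    name: str
    gpus: int
    max_latency_ms: float  # SLA bound the job must meet


def pick_node(job: Job, nodes: List[Node]) -> Optional[Node]:
    """Return the eligible node with the lowest price per unit of work, or None."""
    eligible = [
        n for n in nodes
        if n.free_gpus >= job.gpus and n.expected_latency_ms <= job.max_latency_ms
    ]
    if not eligible:
        return None  # nothing fits; a real scheduler might queue, resize, or preempt
    return min(eligible, key=lambda n: n.price_per_hour / n.throughput)


# Hypothetical example pool (prices and throughputs are made up).
EXAMPLE_NODES = [
    Node("spot-a100", free_gpus=4, price_per_hour=1.2, throughput=10.0, expected_latency_ms=40.0),
    Node("ondemand-h100", free_gpus=8, price_per_hour=4.0, throughput=25.0, expected_latency_ms=20.0),
    Node("cpu-only", free_gpus=0, price_per_hour=0.3, throughput=1.0, expected_latency_ms=200.0),
]
```

With this example pool, a 2-GPU job that tolerates 50 ms lands on `spot-a100` (0.12 USD per work unit versus 0.16), while the same job capped at 30 ms can only go to `ondemand-h100`.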

Features

- Real-time workload scheduling and migration: Cedana matches workloads to available resources based on price, performance, SLAs, and capacity to improve throughput and responsiveness.

- System-level checkpointing and restore: It continuously saves workload state so jobs can resume after GPU or CPU failures without starting over.

- Support for stateful workload failover: Automatic failover helps preserve progress for long-running and mission-critical jobs such as training, inference, and agents.

- Extension of existing orchestrators: The platform is described as working with Kubernetes, Kueue, KServe, Kubeflow, SLURM, and Ray, which helps teams adopt it within current environments.

- Elastic scaling and downscaling: Cedana can scale workloads and clusters up or down, including preempting and saving workloads so resources can be reduced without losing progress.

- Live migration and dynamic resizing: The site highlights GPU live migrations and resizing workloads onto more suitable instances without interruption, which can improve utilization and placement efficiency.
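Cedana describes its checkpointing as system-level, meaning it captures workload state without the application doing the work itself. The sketch below swaps in the simpler application-level technique, where the program saves its own state, purely to show the checkpoint, fail, and resume cycle that the features above rely on. The file path, the `run` loop, and the simulated failure are hypothetical.

```python
import os
import pickle
import tempfile
from typing import Optional


def save_checkpoint(state: dict, path: str) -> None:
    """Write state to a temp file in the same directory, then rename it over `path`."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "wb") as f:
        pickle.dump(state, f)
    # The rename is atomic on POSIX filesystems, so readers see the old or the new
    # checkpoint, not a partially written one.
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> dict:
    """Return the saved state, or a fresh state if no checkpoint exists yet."""
    if not os.path.exists(path):
        return {"step": 0, "total": 0}
    with open(path, "rb") as f:
        return pickle.load(f)


def run(path: str, num_steps: int, checkpoint_every: int = 10,
        fail_at: Optional[int] = None) -> dict:
    """Do `num_steps` units of work, resuming from the last checkpoint if one exists."""
    state = load_checkpoint(path)
    while state["step"] < num_steps:
        if fail_at is not None and state["step"] == fail_at:
            raise RuntimeError("simulated node failure")
        state["total"] += state["step"]  # stand-in for one unit of real work
        state["step"] += 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(state, path)
    save_checkpoint(state, path)
    return state
```

Calling `run(path, 50, fail_at=25)` raises partway through, leaving the checkpoint from step 20 on disk; calling `run(path, 50)` again resumes from step 20 and finishes with the same result (`total == 1225`) as an uninterrupted run, redoing only steps 20 through 24.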

Helpful Tips

- Verify fit by workload type: Cedana appears most relevant for stateful, long-running, or interruption-sensitive compute jobs where checkpointing and migration deliver clear operational value.

- Assess orchestration maturity first: Organizations already using Kubernetes, SLURM, or adjacent ML/HPC tooling are likely to have a faster path to evaluation because Cedana is positioned as an extension layer.

- Validate claims in a controlled environment: The site presents performance and utilization improvements, but buyers should confirm expected gains against their own workload mix, failure patterns, and infrastructure topology.

- Map adoption to operational pain points: The strongest use cases seem to be spot usage, failover, zero-downtime upgrades, and dynamic resizing, so prioritization should start with the most expensive or failure-prone workflows.

- Review checkpointing behavior carefully: For distributed training and inference systems, implementation teams should examine checkpoint frequency, resume behavior, and operational overhead in their specific stack.
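For the checkpoint-frequency question, a widely used rule of thumb from the general checkpointing literature (not from Cedana's documentation) is Young's first-order approximation: with checkpoint cost C and mean time between failures M, the interval that minimizes lost time is roughly sqrt(2CM). The sketch computes that interval alongside a simple overhead model; the input numbers are hypothetical, and the model ignores restart time and failures that occur during a checkpoint.

```python
import math


def young_interval(checkpoint_cost_s: float, mtbf_s: float) -> float:
    """Young's approximation for the checkpoint interval: sqrt(2 * C * M)."""
    return math.sqrt(2.0 * checkpoint_cost_s * mtbf_s)


def overhead_fraction(interval_s: float, checkpoint_cost_s: float, mtbf_s: float) -> float:
    """First-order fraction of time lost to checkpoints plus expected rework.

    C / interval: share of time spent writing checkpoints.
    interval / (2 * M): on average, half an interval of work is redone per failure.
    """
    return checkpoint_cost_s / interval_s + interval_s / (2.0 * mtbf_s)


# Hypothetical inputs: 60 s to write a checkpoint, about one failure per day.
tau = young_interval(60.0, 86_400.0)
print(f"interval ~ {tau:.0f} s, overhead ~ {overhead_fraction(tau, 60.0, 86_400.0):.1%}")
```

With these inputs the interval comes out near 3,220 s (about every 54 minutes) at roughly 3.7% overhead; checkpointing twice as often or half as often raises the modeled overhead to about 4.7%, which is why measured checkpoint cost matters when tuning frequency.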

OpenClaw Skills

Cedana could pair well with OpenClaw in infrastructure operations, AI platform engineering, and workload governance workflows. A likely use case would be OpenClaw agents that monitor queue depth, SLA risk, spot market conditions, and cluster health, then trigger Cedana-based migration or scaling policies through documented APIs and orchestration layers. The site does not confirm a native OpenClaw integration, so this should be treated as a workflow design opportunity rather than a built-in capability.

In practice, OpenClaw skills could be built for capacity planning, failure-response automation, cost-aware job placement, and workload-specific runbooks across training, inference, and HPC environments. That combination could shift platform teams from manual cluster operations toward policy-driven compute management, with OpenClaw handling decision logic and operator workflows while Cedana handles stateful checkpointing, migration, and workload continuity.
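The decision side of such a workflow can be sketched as a pure policy function. The signal names, thresholds, and `Action` values below are hypothetical, and the sketch deliberately stops at choosing an action: dispatching it would go through whatever interface Cedana and the surrounding orchestrator actually document, and nothing here reflects a real OpenClaw skill format.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "none"
    MIGRATE = "migrate"        # checkpoint and move the workload elsewhere
    SCALE_UP = "scale_up"
    SCALE_DOWN = "scale_down"  # checkpoint/preempt, then release capacity


@dataclass
class ClusterSignals:
    # Hypothetical signals an agent might collect; names and units are illustrative.
    queue_depth: int               # jobs waiting for capacity
    sla_risk: float                # 0.0-1.0 estimated risk of missing an SLA
    spot_interruption_prob: float  # 0.0-1.0 estimated chance of a spot reclaim soon
    node_healthy: bool
    utilization: float             # 0.0-1.0 average GPU utilization


def decide(s: ClusterSignals, max_queue: int = 20, risk_threshold: float = 0.5,
           low_util: float = 0.3) -> Action:
    """Map monitored signals to one action, handling failure risk first."""
    if not s.node_healthy or s.spot_interruption_prob >= risk_threshold:
        return Action.MIGRATE  # protect in-flight state before the node goes away
    if s.queue_depth > max_queue or s.sla_risk >= risk_threshold:
        return Action.SCALE_UP
    if s.queue_depth == 0 and s.utilization < low_util:
        return Action.SCALE_DOWN
    return Action.NONE
```

Keeping the policy separate from the call that actually triggers migration or scaling makes the thresholds easy to test and audit before an agent acts on real infrastructure.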

Explore Similar Tools

PixieBrix: Extend your apps, accelerate work

PixieBrix is a low-code enterprise platform for building, deploying, and managing AI-enabled browser extensions that integrate with web apps, data, and APIs to automate workflows, mainly for operations, customer support, and IT teams. In AI-driven workplaces, it helps support leaders and process owners embed context-aware guidance and assistance directly in existing tools, reducing app switching and standardizing work.

SurfSense - Open Source NotebookLM Alternative for Teams

SurfSense is an open source NotebookLM alternative for teams that connects LLMs to internal knowledge sources, syncs data from tools like Notion, Drive, and Gmail, and enables real-time collaborative chat, search, and knowledge management for enterprise users. For knowledge workers, operations teams, and IT leaders, it can improve how shared documents and company knowledge are searched, cited, and discussed in collaborative AI workflows.

OpenClaw VPS Hosting & Deploy OpenClaw Multi Agents in 60s - MyClaw.Host

MyClaw.Host is a hosting platform that provides pre-configured VPS infrastructure for deploying and managing OpenClaw AI agents with one-click setup, mainly for users who need dedicated agent hosting without manual server administration. For AI operations, support, and automation teams, it can reduce setup overhead and simplify running multiple agents across web chat and messaging channels from one dashboard.

Aiqbee - Universal AI Memory Platform | Enterprise Knowledge for Any LLM

Aiqbee is an enterprise AI memory platform that gives any LLM or AI tool persistent organizational context by centralizing company knowledge, connecting it to tools like Teams and IDEs, and adding governance controls, mainly for businesses managing AI use across teams. For IT, operations, support, and development functions, it can reduce repeated prompting and improve consistency by making shared knowledge available across approved AI workflows.

Enterprise Digital Management Solutions | Serviceaide

Serviceaide is an enterprise digital management platform with AI-powered service management, change management, knowledge management, and ticket deflection tools that help organizations automate support and resolve issues faster, mainly for enterprise service and support teams. For ITSM, service desk, HR, facilities, and governance teams, its agentic AI and self-service capabilities can reduce repetitive tickets and improve first-contact resolution.

Team9 - Bring OpenClaw AI Agent to Your Team | Part of Moltbook Ecosystem

Team9 is an AI workspace that lets teams deploy managed OpenClaw AI agents with no setup, hire AI staff, and collaborate on tasks in one place, mainly for organizations that want private, infrastructure-controlled automation. For IT, operations, engineering, and knowledge management teams, it can streamline recurring workflows like reporting, monitoring, documentation, and GitHub operations while keeping sensitive context on their own systems.

Raghim AI - Enterprise AI Chatbots with Complete Data Sovereignty

Raghim AI is an enterprise AI chatbot platform that helps organizations deploy self-hosted or managed chatbots for document Q&A, natural language database querying, and customer support while keeping data inside their infrastructure, making it mainly suited for privacy-conscious enterprises in regulated environments. In AI workflows, it can help IT, security, compliance, and operations teams adopt chatbots more safely by combining retrieval, OCR, integrations, and governance controls within one controlled deployment model.

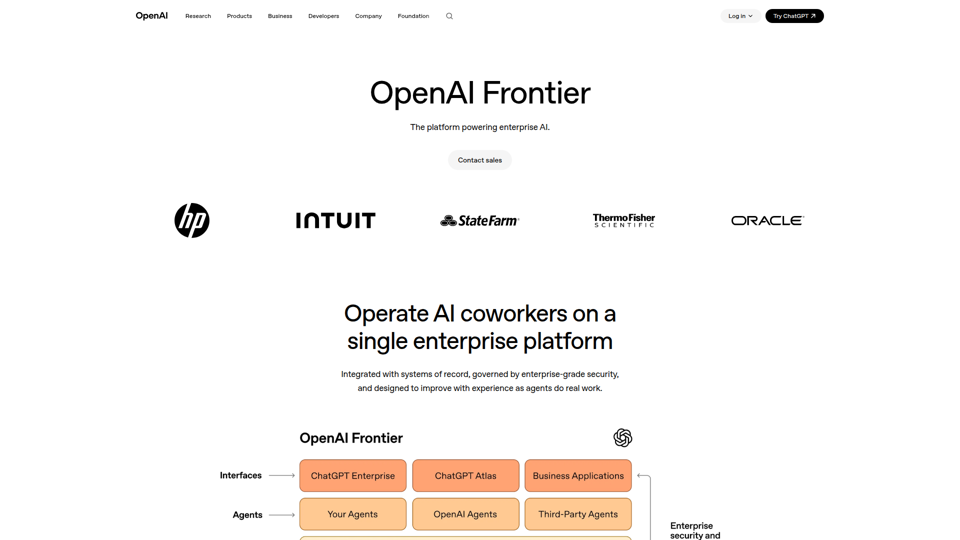

OpenAI Frontier | Enterprise platform for AI agents | OpenAI

OpenAI Frontier is an enterprise platform for deploying secure, production-ready AI agents that connect to systems of record, automate core workflows, and support teams in roles like data analysis, forecasting, software engineering, customer support, and procurement. For IT, operations, and business leaders, it can make AI work more reliable in production through governance, auditability, and feedback loops that help agents improve over time.