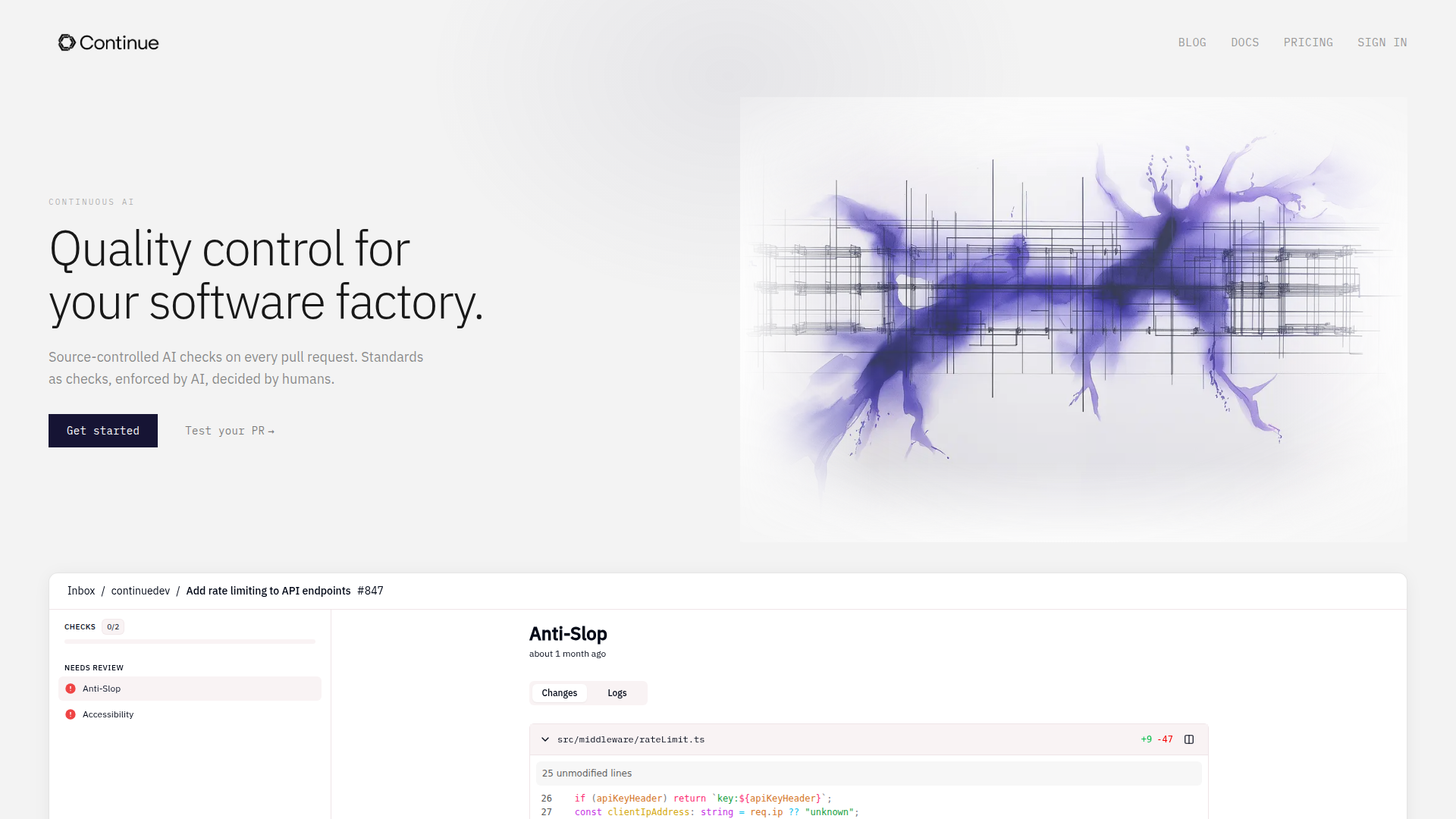

Continue • Quality control for your software factory.

Detail Information

What

Continue is a code quality control product for software teams that want AI-based checks on every pull request. It appears to target engineering organizations that use GitHub and a pull-request workflow, and its goal is to enforce team-defined standards consistently as development velocity increases.

In the core workflow, teams write AI checks as markdown files stored in the repository, and those checks run as native GitHub status checks on each pull request. Its positioning appears narrower than general AI code review: the AI enforces standards that humans define, and it suggests fixes when code falls short of them.
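The workflow can be sketched in two small steps: parse a markdown check file, then turn the reviewer's verdict into the body of a GitHub commit status. This is a minimal illustration under stated assumptions, not Continue's implementation. The file layout (a `# ` heading as the check name, the rest as instructions) and the `continue/<name>` context string are hypothetical; only the payload fields (`state`, `description`, `context`) and the `POST /repos/{owner}/{repo}/statuses/{sha}` endpoint come from GitHub's commit status API.

```python
import re
from dataclasses import dataclass

# The four states GitHub's commit status API accepts.
STATUS_STATES = {"error", "failure", "pending", "success"}


@dataclass
class Check:
    name: str          # hypothetical: taken from the file's first "# " heading
    instructions: str  # hypothetical: everything after that heading


def parse_check(markdown: str) -> Check:
    """Split a markdown check file into a name and review instructions."""
    match = re.search(r"^# (.+)$", markdown, flags=re.MULTILINE)
    if match is None:
        raise ValueError("check file needs a '# ' heading to use as its name")
    return Check(
        name=match.group(1).strip(),
        instructions=markdown[match.end():].strip(),
    )


def status_payload(check: Check, state: str, summary: str) -> dict:
    """JSON body for POST /repos/{owner}/{repo}/statuses/{sha}."""
    if state not in STATUS_STATES:
        raise ValueError(f"unsupported status state: {state!r}")
    slug = check.name.strip().lower().replace(" ", "-")
    return {
        "state": state,
        "description": summary[:140],  # keep it to one short line on the PR
        "context": f"continue/{slug}",  # hypothetical naming scheme
    }
```

For example, a check file whose heading is `# Code Security` would report under the context `continue/code-security`, which is the name reviewers would see in the pull request's checks list.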

Features

- AI checks on every pull request — Reviews run automatically on PRs, helping teams apply quality controls continuously instead of relying only on manual review.

- Source-controlled standards — Checks are written as markdown in the repo, which makes review criteria versioned, visible, and maintainable alongside code.

- Native GitHub status checks — Results appear in the pull request workflow as standard checks, reducing process friction for teams already operating in GitHub.

- Suggested fixes for failed checks — When code misses the defined standard, the system can propose fixes, which may reduce reviewer effort on mechanical issues.

- Human-defined enforcement scope — The product emphasizes catching only what the team explicitly specifies, which supports predictable and policy-driven review outcomes.

- Specialized quality checks — The page references examples such as anti-slop, accessibility, and code security review, suggesting teams can define targeted review categories.
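Suggested fixes need a delivery mechanism. One common option for pull-request tools is GitHub's suggested-changes syntax: a fenced block tagged `suggestion` inside a review comment, which the PR author can commit directly from the GitHub UI. The page does not say whether Continue uses this mechanism, so the sketch below only shows how such a comment could be assembled for GitHub's "create a review comment for a pull request" endpoint. The file path, line number, and fix text in the usage example are hypothetical.

```python
FENCE = "`" * 3  # built at runtime so this snippet can sit inside a markdown fence


def suggestion_comment(
    commit_id: str, path: str, line: int, reason: str, replacement: str
) -> dict:
    """JSON body for POST /repos/{owner}/{repo}/pulls/{pull_number}/comments."""
    body = f"{reason}\n\n{FENCE}suggestion\n{replacement}\n{FENCE}"
    return {
        "body": body,
        "commit_id": commit_id,  # head commit of the pull request
        "path": path,            # file path relative to the repository root
        "line": line,            # line in the diff that the suggestion replaces
        "side": "RIGHT",         # RIGHT = the PR's new version of the file
    }


if __name__ == "__main__":
    # Hypothetical failed accessibility check on a single line.
    comment = suggestion_comment(
        commit_id="<head-sha>",
        path="src/ui/Avatar.tsx",
        line=12,
        reason="Accessibility check: images need alt text.",
        replacement='<img src={url} alt="User avatar" />',
    )
    print(comment["body"])
```

Keeping the explanation (`reason`) separate from the replacement text lets a reviewer see why the check failed before deciding whether to accept the fix.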

Helpful Tips

- Start with narrow, high-value standards — For this type of product, the strongest early use cases are repetitive review rules that are easy to define and expensive to enforce manually.

- Treat checks like engineering policy — Because standards live in the repo, teams should manage them with code review, ownership, and change history rather than as informal guidance.

- Separate mechanical checks from architectural review — AI-enforced rules are most useful for consistency and policy adherence; human reviewers should still handle tradeoffs, design judgment, and context-heavy decisions.

- Pilot on one team or repository first — A controlled rollout helps validate signal quality, tune rule wording, and prevent unnecessary friction before broader adoption.

- Define acceptance and rejection criteria clearly — The effectiveness of this model depends heavily on how precisely checks are written; vague standards are likely to create weaker outcomes.
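Because precise wording matters and the checks live in the repository, one way to act on these tips together is to lint check files in CI, the same way code is linted. The sketch below assumes a hypothetical house convention in which every check file lists its acceptance and rejection criteria as bullets under `## Pass if` and `## Fail if` headings; the page does not state that Continue requires this structure.

```python
import re

# Hypothetical convention: every check file must spell out both outcomes.
REQUIRED_SECTIONS = ("Pass if", "Fail if")


def section_bullets(markdown: str, title: str) -> list[str]:
    """Return the '- ' bullets listed under a '## <title>' heading."""
    # Capture everything after the heading up to the next '## ' heading or EOF.
    pattern = rf"^## {re.escape(title)}\s*$(.*?)(?=^## |\Z)"
    match = re.search(pattern, markdown, flags=re.MULTILINE | re.DOTALL)
    if match is None:
        return []
    return [
        line[2:].strip()
        for line in match.group(1).splitlines()
        if line.startswith("- ") and line[2:].strip()
    ]


def lint_check(markdown: str) -> list[str]:
    """Report problems that would leave a check's verdict ambiguous."""
    problems = []
    for title in REQUIRED_SECTIONS:
        if not section_bullets(markdown, title):
            problems.append(f"missing or empty '## {title}' section")
    return problems
```

A vague check such as "Use good names." would fail this lint twice, while a check that lists concrete pass and fail conditions returns an empty problem list, which a CI job could treat as success.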

OpenClaw Skills

Continue could fit well into the OpenClaw ecosystem as a trigger point for software delivery workflows. A likely use case would be OpenClaw skills that watch pull request events, classify failed Continue checks by category, route issues to the right engineering owners, draft remediation tasks, and summarize recurring quality problems across repositories. If Continue exposes structured outputs through GitHub status checks, OpenClaw agents could use that signal to automate triage and reporting even without a confirmed native integration.

This combination could be especially useful for engineering managers, platform teams, and developer productivity functions. OpenClaw workflows could turn repeated Continue findings into updated coding standards, onboarding guidance, backlog items, or architecture review inputs. In practice, that would shift some review work from ad hoc manual enforcement toward a more systematic software factory model, in which policy definition, exception handling, and continuous improvement become the main human responsibilities.
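The triage and reporting ideas in this section can be sketched in Python, assuming an OpenClaw skill (or any script) has already collected commit status results for recent pull requests. The `StatusResult` shape, the `continue/`-prefixed context names, and the `OWNERS` routing table are hypothetical; no native OpenClaw or Continue API is implied.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical routing table: status-check context prefix -> owning team.
OWNERS = {
    "continue/security": "appsec",
    "continue/accessibility": "frontend-platform",
    "continue/anti-slop": "dev-productivity",
}
DEFAULT_OWNER = "eng-leads"
FAILED_STATES = ("failure", "error")


@dataclass(frozen=True)
class StatusResult:
    repo: str
    pr_number: int
    context: str  # status check name shown on the PR
    state: str    # "success", "failure", "error", or "pending"


def owner_for(context: str) -> str:
    """Pick the owner whose prefix matches the context, longest prefix first."""
    for prefix in sorted(OWNERS, key=len, reverse=True):
        if context.startswith(prefix):
            return OWNERS[prefix]
    return DEFAULT_OWNER


def triage(results: list[StatusResult]) -> dict[str, list[StatusResult]]:
    """Route failed or errored checks to owners; other states are skipped."""
    queues: dict[str, list[StatusResult]] = defaultdict(list)
    for result in results:
        if result.state in FAILED_STATES:
            queues[owner_for(result.context)].append(result)
    return dict(queues)


def recurring(results: list[StatusResult], min_repos: int = 2) -> list[tuple[str, int]]:
    """Checks failing in at least `min_repos` distinct repositories."""
    repos_per_check: dict[str, set[str]] = defaultdict(set)
    for result in results:
        if result.state in FAILED_STATES:
            repos_per_check[result.context].add(result.repo)
    hits = [(ctx, len(repos)) for ctx, repos in repos_per_check.items()
            if len(repos) >= min_repos]
    # Most widespread first; ties broken alphabetically for stable reports.
    return sorted(hits, key=lambda hit: (-hit[1], hit[0]))
```

Routing by the longest matching prefix lets a team add a more specific owner, such as one for `continue/security/secrets`, without disturbing broader rules, and checks that match nothing fall back to `DEFAULT_OWNER`. The output of `recurring` is the kind of cross-repository signal that could feed updated standards or backlog items.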

Explore Similar Tools

Free AI Photo Editor: Edit & Generate Image Online | Pokecut

Pokecut is an AI photo editor that helps users remove backgrounds, enhance images, and generate visuals online, mainly for ecommerce sellers, marketers, and creators who need quick design-ready assets. It speeds up routine image production so visual teams can create polished content with less manual editing.

Qoder - The Agentic Coding Platform

Qoder is an agentic coding platform that helps developers understand codebases and execute software tasks with AI agents, mainly for professional software engineers and development teams. It improves engineering throughput by combining strong code context with advanced models for more reliable task completion.

Seedance 2.0

Seedance 2.0 is ByteDance's AI video generation model designed to create high-quality videos from prompts and multimodal inputs, mainly for creators, developers, and media teams. In the AI era, it helps visual content roles turn ideas into production-ready motion assets with far less manual editing effort.

Struct | Automate your on-call runbook

Struct is an AI on-call agent that investigates engineering alerts and bugs by analyzing logs, metrics, traces, and codebases, mainly for software engineers and SRE teams. In the AI era, it helps incident responders shorten triage time by delivering root-cause findings and suggested fixes directly in workflows.

Handit.ai — The Open Source Engine that Auto-Improves Your AI Agents

Handit.ai is an open-source optimization engine that evaluates AI agent decisions, generates improved prompts and datasets, and A/B tests changes for teams building and operating AI agents. It helps AI engineers and product teams improve agent quality faster while keeping tighter control over production behavior.

Free AI Grammar Checker - LanguageTool

LanguageTool is an AI-powered grammar and writing assistant that helps users check grammar, spelling, punctuation, and style across more than 30 languages, mainly for students, professionals, and multilingual teams. It helps writing-heavy roles communicate more clearly and edit faster at scale.

Trace

Trace is a software tool designed to support digital workflows, likely focused on helping teams organize, monitor, or analyze work more effectively. In the AI era, tools that centralize operational visibility help technical and business roles make faster decisions with less manual follow-up.

The AI for Problem Solvers | Claude by Anthropic

Claude by Anthropic is an AI assistant for problem solvers that helps users tackle complex work such as writing, coding, data analysis, research, and organizing tasks, mainly for professionals, developers, and teams handling difficult projects. In AI-enabled workflows, it can help knowledge workers and software teams move faster from analysis to execution while keeping people in control of approvals and file access.