Video Diffusion Models

Rate this Tool

Average Score

Total Votes

Select your score (1-10):

Detail Information

What

Video Diffusion Models is a research system for generating video with diffusion models. It targets machine learning researchers and teams working on generative media, especially those exploring text-conditioned video generation, unconditional video generation, and methods for extending standard image diffusion architectures to video.

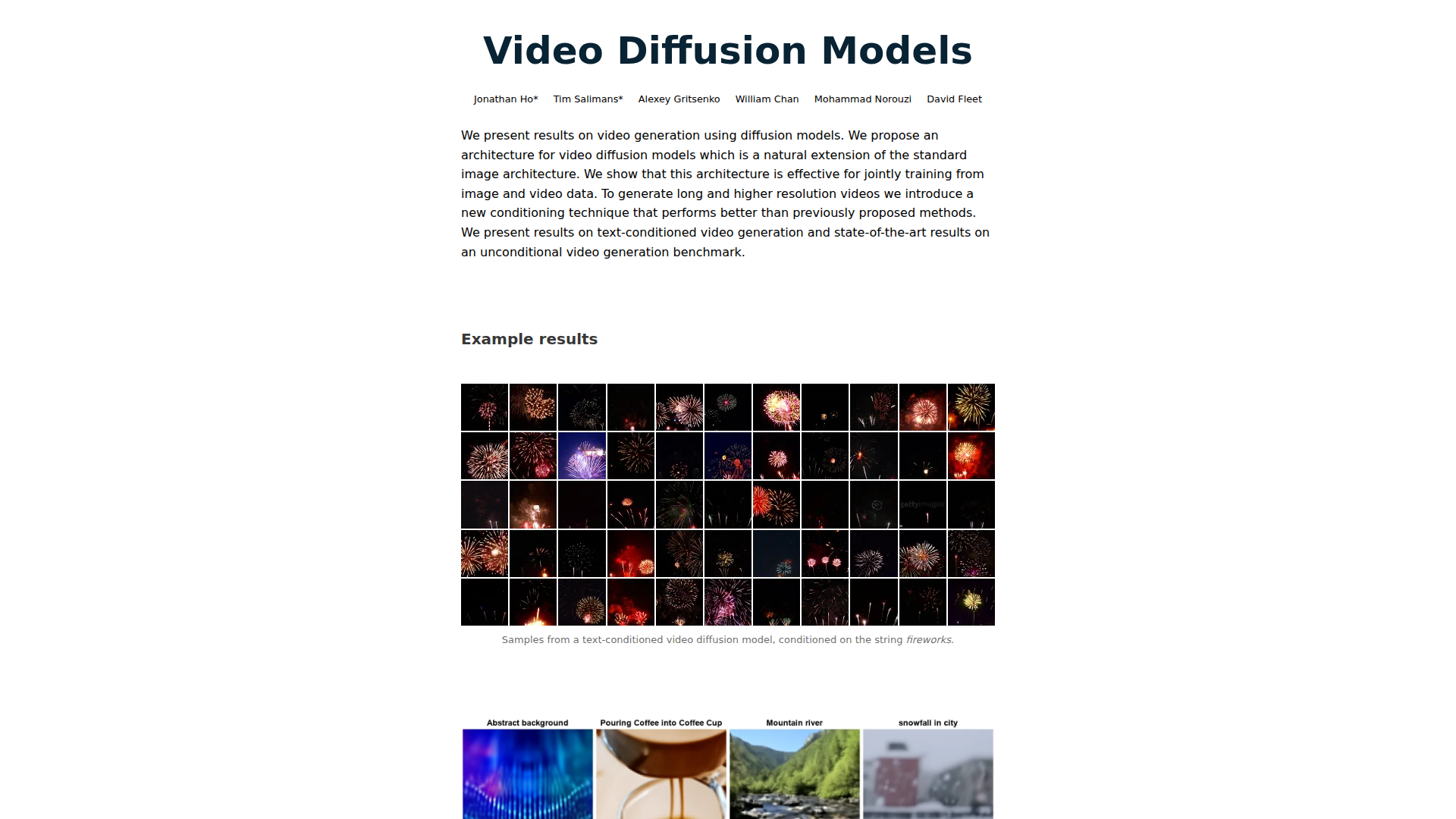

The core workflow is to train a diffusion model on fixed-length video frame blocks, optionally combine image and video training, and then extend generation to longer or higher-resolution videos through a conditioning method during sampling. Based on the page content, this is best understood as a research approach and model architecture rather than a packaged end-user product.

Features

- Video generation with diffusion models: Applies Gaussian diffusion modeling to video, showing that high-quality video can be produced with relatively limited changes to standard image diffusion setups.

- Factorized space-time UNet architecture: Extends the common 2D image UNet to video in a way designed to handle spatiotemporal data within accelerator memory constraints.

- Joint image-video training: Supports training across both image and video objectives, which the authors report as important for improving video sample quality.

- Text-conditioned video generation: Generates videos from text prompts, with examples shown for prompt-conditioned outputs.

- Autoregressive extension for longer videos: Repurposes a trained fixed-block model to generate videos beyond its native frame window by operating block-autoregressively over frames.

- Gradient-based conditioning method: Improves consistency with conditioning information during sampling and is presented as better than prior replacement-style methods for temporal coherence and higher-resolution extension.

Helpful Tips

- Treat this as a research foundation, not a turnkey platform: The page presents methods and results, but it does not describe deployment tooling, APIs, or production controls.

- Check fit for your use case: The strongest evidence here is for generative video research, especially unconditional benchmarks and text-conditioned generation, rather than editing, commercial asset production, or enterprise workflow management.

- Evaluate temporal consistency carefully: For video systems, coherence across frames matters as much as single-frame quality, and this work specifically emphasizes conditioning methods that improve that property.

- Consider mixed image-video training strategies: If reproducing or adapting this approach, the reported benefit of joint image-video training may be important when video-only data is limited or noisy.

- Review the full paper before implementation: The page is a summary, so practical details on training setup, limitations, and benchmarking likely require the cited paper.

OpenClaw Skills

Within the OpenClaw ecosystem, this work would most likely serve as a model-centric building block for generative video workflows rather than a standalone business application. Likely skills could include prompt-to-video experimentation agents, benchmark evaluation workflows, dataset preparation pipelines for image-video joint training, and research copilots that compare sampling strategies, temporal coherence, and conditioning behavior across runs. These are inferred use cases; the page does not state any native OpenClaw integration.

For media R&D teams, AI labs, or creative tooling companies, an OpenClaw-based layer around this model could change work by making video generation more operational and testable. Likely agents could automate prompt sweeps, run quality reviews on generated clips, manage block-autoregressive long-video generation jobs, and summarize experimental findings for researchers or product teams. In practice, that would shift the model from a paper result into a repeatable workflow component for prototyping and evaluation in generative video pipelines.

Embed Code

Share this AI tool on your website or blog by copying and pasting the code below. The embedded widget will automatically update with the latest information.

<iframe src="https://www.aimyflow.com/ai/video-diffusion-github-io/embed" width="100%" height="400" frameborder="0"></iframe>

Explore Similar Tools

Free AI Photo Editor: Edit & Generate Image Online | Pokecut

Pokecut is an AI photo editor that helps users remove backgrounds, enhance images, and generate visuals online, mainly for ecommerce sellers, marketers, and creators who need quick design-ready assets. It speeds up routine image production so visual teams can create polished content with less manual editing.

Qoder - The Agentic Coding Platform

Qoder is an agentic coding platform that helps developers understand codebases and execute software tasks with AI agents, mainly for professional software engineers and development teams. It improves engineering throughput by combining strong code context with advanced models for more reliable task completion.

Seedance 2.0

Seedance 2.0 is ByteDance's AI video generation model designed to create high-quality videos from prompts and multimodal inputs, mainly for creators, developers, and media teams. In the AI era, it helps visual content roles turn ideas into production-ready motion assets with far less manual editing effort.

Struct | Automate your on-call runbook

Struct is an AI on-call agent that investigates engineering alerts and bugs by analyzing logs, metrics, traces, and codebases, mainly for software engineers and SRE teams. In the AI era, it helps incident responders shorten triage time by delivering root-cause findings and suggested fixes directly in workflows.

Handit.ai — The Open Source Engine that Auto-Improves Your AI Agents

Handit.ai is an open-source optimization engine that evaluates AI agent decisions, generates improved prompts and datasets, and A/B tests changes for teams building and operating AI agents. It helps AI engineers and product teams improve agent quality faster while keeping tighter control over production behavior.

Free AI Grammar Checker - LanguageTool

LanguageTool is an AI-powered grammar and writing assistant that helps users check grammar, spelling, punctuation, and style across more than 30 languages, mainly for students, professionals, and multilingual teams. It helps writing-heavy roles communicate more clearly and edit faster at scale.

Trace

Trace is a software tool designed to support digital workflows, likely focused on helping teams organize, monitor, or analyze work more effectively. In the AI era, tools that centralize operational visibility help technical and business roles make faster decisions with less manual follow-up.

The AI for Problem Solvers | Claude by Anthropic

Claude by Anthropic is an AI assistant for problem solvers that helps users tackle complex work such as writing, coding, data analysis, research, and organizing tasks, mainly for professionals, developers, and teams handling difficult projects. In AI-enabled workflows, it can help knowledge workers and software teams move faster from analysis to execution while keeping people in control of approvals and file access.