Deepnight - Nextgen Night Vision

Detailed Information

What

Deepnight is a night-vision imaging technology company focused on improving visibility in very low-light environments. Based on the page, it combines AI-based image processing with low-light sensors to turn dark scenes into vivid color and widen nighttime sight into a fuller field of view.

The product appears positioned as an enabling vision system for organizations that operate after dark or in limited-light conditions. The site highlights likely use cases in autonomous vehicle navigation, wildlife surveillance, agricultural monitoring, environmental management, and defense-related safety, suggesting a B2B or infrastructure-oriented offering rather than a consumer device.

Features

- AI-enhanced low-light imaging: Combines AI imaging methods with low-light sensors to improve scene visibility when conventional viewing is limited.

- Colorized night-time output: Presents dark environments in vivid color, which may help operators interpret scenes more easily than with traditional low-light imagery.

- Expanded field of vision: Described as broadening nighttime sight into a full field of vision, supporting wider situational awareness.

- Automatic motion compensation: Algorithms adjust for movement to maintain steadier visibility across changing conditions and terrain.

- Real-time environmental adaptation: Processing is described as adapting in real time to different lighting contexts, from urban light pollution to remote natural darkness.

- Application flexibility: The technology is presented as adaptable across multiple sectors, including autonomy, research, agriculture, environmental monitoring, and safety-oriented operations.

Helpful Tips

- Validate performance by use case: Night-vision requirements differ significantly across vehicles, research, agriculture, and defense, so field testing in the target environment is essential.

- Compare against incumbent systems: If replacing image intensifiers or standard digital cameras, assess differences in latency, clarity, field of view, and operator usability under real conditions.

- Check deployment form factor early: The page describes core imaging capabilities, but it does not specify packaging, hardware interfaces, or integration method, so these should be confirmed during evaluation.

- Review edge-case behavior: Motion handling and adaptation are emphasized, so buyers should examine performance in fast movement, mixed lighting, weather variation, and terrain changes.

- Clarify operational ownership: For multi-team deployments, define whether the system will support human operators, autonomous perception stacks, or both, since each workflow has different reliability and tuning needs.
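
The latency comparison suggested in the tips above could be summarized with a small script once field measurements exist. The following is a minimal sketch that assumes each system's per-frame latency has already been recorded in milliseconds. The function names `summarize_latency` and `compare_systems` are hypothetical, and nothing here reflects a Deepnight interface, since the page does not document one.

```python
from statistics import median


def summarize_latency(samples_ms):
    """Return the median and nearest-rank 95th percentile of latency samples (ms)."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    # Nearest-rank percentile: rank = ceil(0.95 * n), computed with integer math.
    rank = max(1, -(-95 * len(ordered) // 100))
    return {"median_ms": median(ordered), "p95_ms": ordered[rank - 1]}


def compare_systems(candidate_ms, incumbent_ms):
    """Compare two latency summaries; negative deltas favor the candidate system."""
    cand = summarize_latency(candidate_ms)
    inc = summarize_latency(incumbent_ms)
    return {
        "candidate": cand,
        "incumbent": inc,
        "median_delta_ms": cand["median_ms"] - inc["median_ms"],
        "p95_delta_ms": cand["p95_ms"] - inc["p95_ms"],
    }
```

Reporting the 95th percentile alongside the median is a deliberate choice here: tail latency often matters more than the typical case for moving platforms, so a system with a good median but a poor tail would still stand out.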

OpenClaw Skills

Within the OpenClaw ecosystem, Deepnight could plausibly support skills and agents built around low-light perception workflows. Candidate use cases include agents that monitor night-time image streams for anomalies, summarize environmental changes across shifts, classify terrain or objects in low-visibility scenes, or route alerts to operations teams when visibility-based thresholds are met. The site does not state a native OpenClaw integration, so this should be treated as an implementation possibility rather than a confirmed capability.
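
As an illustration of the threshold-based alerting idea mentioned above, the sketch below flags frames whose average brightness departs from a rolling baseline. It is an assumption-laden example, not a Deepnight or OpenClaw API: frames are modeled as plain lists of grayscale rows (0-255), and `LuminanceMonitor`, `window`, and `threshold` are hypothetical names chosen for this example.

```python
from collections import deque


def mean_luminance(frame):
    """Average pixel value of a grayscale frame given as a list of rows (0-255)."""
    pixels = [p for row in frame for p in row]
    if not pixels:
        raise ValueError("frame has no pixels")
    return sum(pixels) / len(pixels)


class LuminanceMonitor:
    """Flags frames whose mean luminance departs from a rolling baseline."""

    def __init__(self, window=5, threshold=20.0):
        self.history = deque(maxlen=window)  # recent mean-luminance values
        self.threshold = threshold           # allowed deviation, in pixel units

    def observe(self, frame):
        """Return an alert dict if the frame deviates from the baseline, else None."""
        value = mean_luminance(frame)
        alert = None
        if self.history:
            baseline = sum(self.history) / len(self.history)
            if abs(value - baseline) > self.threshold:
                alert = {"luminance": value, "baseline": baseline}
        # Record the value after the check so a frame is never compared with itself.
        self.history.append(value)
        return alert
```

A real agent would likely forward the returned alert to a notification channel and use a richer signal than mean brightness. The rolling baseline is used so that slow lighting drift, such as dusk fading into full darkness, does not trigger alerts on its own.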

Combined with OpenClaw, Deepnight could be especially useful in industries where night operations are difficult to scale with human attention alone. Likely workflows include autonomous patrol review for security teams, wildlife observation pipelines for researchers, after-dark crop condition monitoring for agriculture, and machine-vision assistance for mobile systems operating at night. In practice, this pairing could shift low-light imaging from a passive visual aid into an operational decision layer that supports detection, triage, and response.

Explore Similar Tools

Noetic | Get Hardware Compliance Done in Weeks, Not Months

Noetic is an AI-powered hardware compliance platform that helps hardware teams identify applicable regulations, draft technical documentation, and find suitable testing labs faster. For compliance, regulatory, and engineering functions, it can shorten the path from standards research to lab-ready documentation by keeping requirements, documents, and status updates in one place.

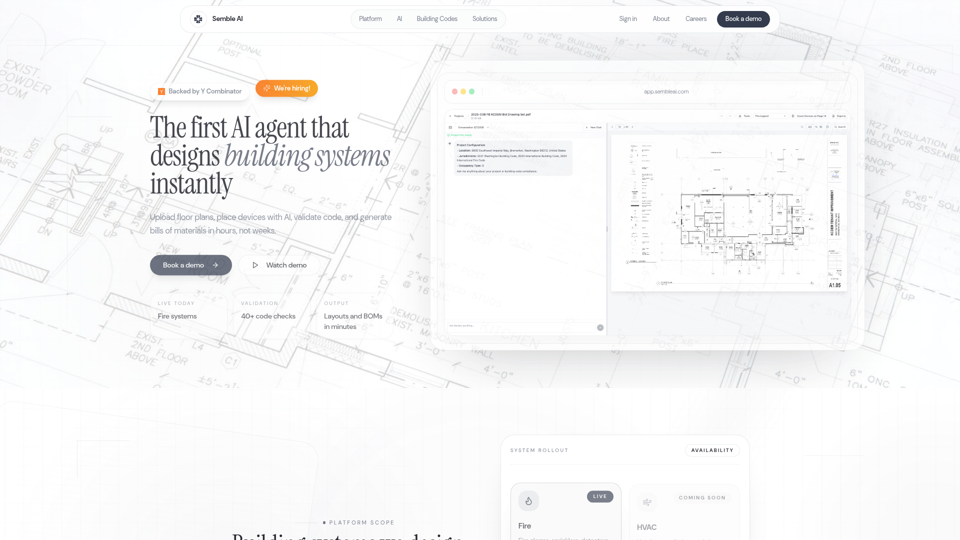

Semble AI - AI-Powered Building System Design

Semble AI is an AI-powered building system design platform that helps engineering and construction teams upload floor plans, place devices, check building-code compliance, and generate layouts and bills of materials, with current live support focused on fire systems. For fire protection designers, MEP engineers, and plan reviewers, it can shorten repetitive code research and drafting work by tying AI-generated layouts to cited code requirements and project documents.

Normal Factory

Normal Factory is a hardware testing and certification platform that helps companies prepare for and manage compliance for standards such as FCC, ISED, CE, and ASTM through pre-compliance software and a step-by-step process, mainly for hardware teams bringing products to market. For hardware, compliance, and operations professionals, it can reduce manual coordination and make certification work faster to review, document, and move toward market approval.

SigmanticAI - Hardware Verification Automation

SigmanticAI is an AI hardware verification automation tool that generates UVM testbenches, constrained stimulus, functional coverage, assertions, and register models for semiconductor design verification engineers working in existing DV flows. For verification and chip design teams, it can reduce manual boilerplate so engineers spend more time reviewing edge cases, improving coverage closure, and validating design intent.

Embedder | AI Firmware Engineer

Embedder is an AI firmware engineering tool that generates, tests, and debugs verified C++ and Rust firmware from datasheets and hardware documents for embedded and firmware engineers working with MCUs and peripherals. By grounding code in source documentation and validating it on simulated and physical hardware, it can help embedded teams reduce datasheet interpretation errors and speed driver development and debugging.

Stillwind

Stillwind is an AI search tool for electrical engineering that helps users find electronic parts with natural language queries by matching detailed specifications against a large parts database, mainly for electrical engineers and embedded software developers. In AI-assisted hardware workflows, it can reduce component research time and improve how engineers and sourcing-related teams translate design needs into precise part selections.

Zettascale

Zettascale is a Silicon Valley hardware company building energy-efficient, reconfigurable XPU chips for AI training and inference, mainly for teams developing advanced AI compute infrastructure. For AI hardware, compiler, and systems engineers, model-optimized dataflow and reduced memory movement can improve throughput while lowering energy use in training and inference workloads.

Silogy

Silogy builds Viv, an on-premise AI verification engineer that analyzes logs, code, waveforms, and test outputs to debug failing digital design regressions faster, mainly for chip developers and verification engineers. For semiconductor verification teams, this can automate repetitive root-cause analysis and speed handoff-ready debug insights while keeping sensitive design data on internal servers.