Reworkd

Detail Information

What

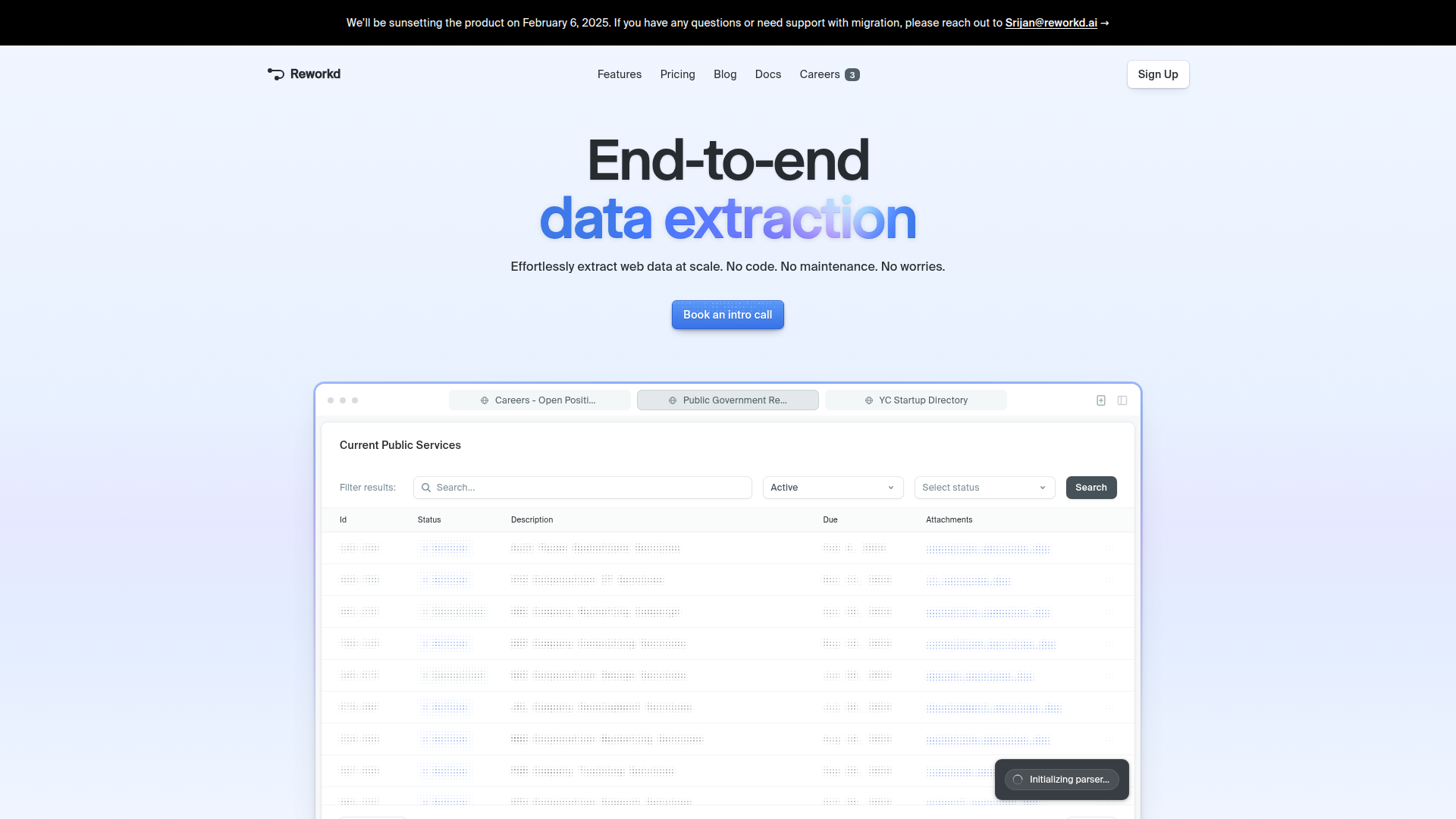

Reworkd is an end-to-end web data extraction product designed to automate collection, parsing, validation, and delivery of data from websites. The page positions it as a no-code system that handles core scraping workflow steps such as scanning sites, generating extraction code, running extractors, and outputting structured results.

It appears to serve teams that need web data at scale without building and maintaining scraping infrastructure in-house. Based on the examples shown, likely users include operations, research, data, and commercial teams that monitor public websites, directories, listings, regulations, or documents; the page does not define target customer segments in detail. The page also states that the product is being sunset on February 6, 2025.

Features

- Automated extraction code generation — The product states that AI agents understand web pages and generate code to extract the requested data, reducing manual scraper development.

- End-to-end data pipeline automation — Reworkd says it scans websites, runs extractors, validates results, and outputs data in one system, which can simplify multi-step scraping operations.

- Self-healing scrapers — The platform claims it detects website changes and repairs data failures automatically, helping reduce maintenance when source pages change.

- Support for multiple data types — The page says it can retrieve text, images, and documents, which is useful for mixed-content extraction workflows.

- Analytics dashboard — Reworkd presents interactive analytics for tracking what is being extracted, what is working, and what is changing across jobs.

- No-code workflow — The product is described as requiring no code from the user, which likely lowers adoption barriers for non-engineering teams.
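
To make the "validates results, and outputs data" step in the list above more concrete, here is a minimal sketch of what a validation stage in any extraction pipeline might look like. It is not Reworkd's code or API: the record shape, the `REQUIRED_FIELDS` schema, and the `validate_records` function are all hypothetical and chosen only for illustration.

```python
from typing import Any

# Hypothetical schema (an assumption, not taken from the product page):
# field name -> expected Python type.
REQUIRED_FIELDS: dict[str, type] = {"title": str, "url": str, "price": float}


def validate_records(
    records: list[dict[str, Any]],
) -> tuple[list[dict[str, Any]], list[tuple[dict[str, Any], str]]]:
    """Split extracted records into valid ones and (record, reason) rejections."""
    valid: list[dict[str, Any]] = []
    rejected: list[tuple[dict[str, Any], str]] = []
    for record in records:
        problem = None
        for name, expected in REQUIRED_FIELDS.items():
            value = record.get(name)
            # A field counts as missing when absent, None, or an empty string.
            if value is None or value == "":
                problem = f"missing field: {name}"
                break
            if not isinstance(value, expected):
                problem = f"wrong type for {name}: {type(value).__name__}"
                break
        if problem is None:
            valid.append(record)
        else:
            rejected.append((record, problem))
    return valid, rejected
```

Keeping the rejection reason next to each rejected record is one way to make failures reviewable rather than silently dropped; a real pipeline would likely need richer rules than presence and type checks.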

Helpful Tips

- Plan for product sunset and migration — Since the product is scheduled to be discontinued on February 6, 2025, any evaluation should focus on migration support, export continuity, and replacement architecture.

- Verify extraction quality on representative sites — For tools in this category, validate performance across pagination, dynamic content, attachments, and site changes rather than relying on homepage claims alone.

- Clarify output formats and operational ownership — The page shows structured outputs but does not fully specify delivery methods, orchestration controls, or downstream integration options, so these areas would need confirmation.

- Test maintenance behavior under real change events — Self-healing claims are valuable, but buyers should examine how failures are surfaced, reviewed, and corrected in production workflows.

- Assess document-heavy use cases separately — The site highlights extraction of documents and public records, so teams working with PDFs or attachments should confirm document parsing depth and metadata handling.
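
The tips above about verifying extraction quality and testing behavior when sites change could be approached with a small, tool-agnostic check like the sketch below. It assumes extracted records are available as plain dictionaries exported from whatever tool is under evaluation; the function names `fill_rates` and `flag_regressions`, the field names, and the 0.2 threshold are illustrative assumptions, not part of any product.

```python
from typing import Any


def fill_rates(records: list[dict[str, Any]], fields: list[str]) -> dict[str, float]:
    """Share of records in which each field is present and non-empty."""
    if not records:
        return {name: 0.0 for name in fields}
    rates: dict[str, float] = {}
    for name in fields:
        filled = sum(1 for r in records if r.get(name) not in (None, "", []))
        rates[name] = filled / len(records)
    return rates


def flag_regressions(
    baseline: dict[str, float],
    current: dict[str, float],
    max_drop: float = 0.2,  # assumed tolerance; tune per use case
) -> list[str]:
    """Fields whose fill rate fell by more than max_drop since the baseline run."""
    return sorted(
        name
        for name, base in baseline.items()
        if base - current.get(name, 0.0) > max_drop
    )
```

Running `fill_rates` on a known-good sample and again after a source site changes, then passing both results to `flag_regressions`, gives a simple signal for which fields (for example, a document link) stopped being populated. It does not measure correctness of the values themselves, so spot checks against the live pages are still needed.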

OpenClaw Skills

Within the OpenClaw ecosystem, this kind of product would likely fit as a web data ingestion layer for downstream agents and decision workflows. Likely use cases include agents that monitor public procurement pages, collect regulatory filings, extract structured records from directories, or track changes in listings and attachments, then pass cleaned data into enrichment, classification, or alerting skills.

Because the page does not state a native OpenClaw integration, any connection here is an inferred workflow rather than a confirmed capability. Still, a practical combination could involve OpenClaw agents that schedule extraction jobs, review anomalies, summarize site changes, route documents for analysis, and trigger industry-specific actions for analysts, compliance teams, market researchers, or public-sector intelligence workflows. This would shift work away from manual page checking and brittle scripts toward managed, agent-assisted data operations.

Explore Similar Tools

Bright Data for AI – Connect Your AI to the Web

Bright Data for AI is a web data platform that helps AI teams search, crawl, extract, and collect structured real-time and training data from the web through APIs, remote browsers, datasets, and automation tools. For AI engineers, data scientists, and agent builders, it can reduce the effort of building web access and data acquisition pipelines so they can focus more on model behavior and application logic.

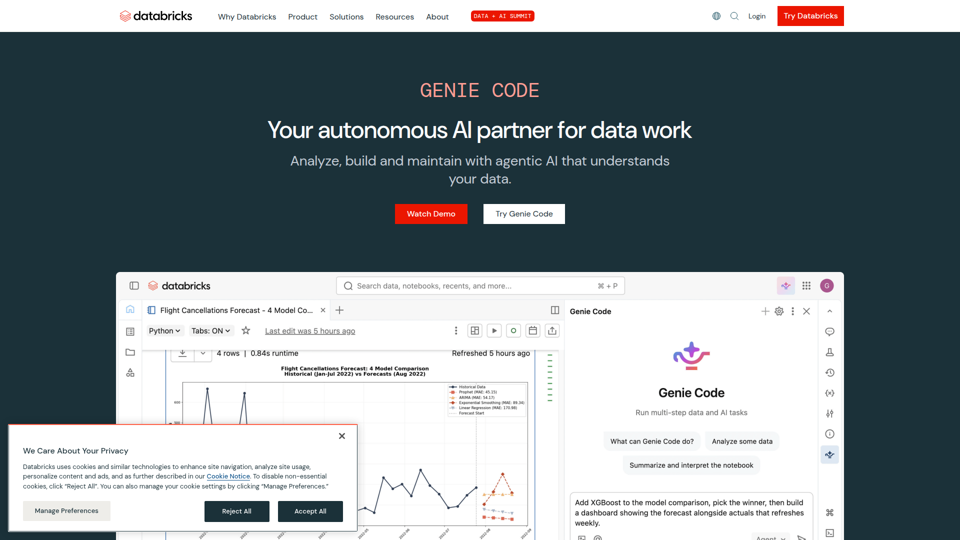

Autonomous AI for Data Teams | Databricks

Databricks Genie Code is an autonomous AI tool in the Databricks workspace that helps data teams plan, execute, and maintain data science, machine learning, data engineering, analytics, and dashboard workflows using natural language and enterprise data context. For data engineers, data scientists, and analysts, it can reduce manual orchestration by grounding work in governed metadata and proactively supporting production pipelines, models, and BI assets.

BlazorData - Home

BlazorData is a Blazor-based data orchestration platform for enterprise-grade data management, transformation, and workflow automation, mainly aimed at teams handling structured data processes in business or technical environments. In AI-era workflows, it can help data and operations professionals organize cleaner, more reliable pipelines that support automation and downstream analysis.

Blackshark.ai - AI Infrastructure for the Physical World

Blackshark.ai is an AI geospatial infrastructure platform that turns satellite, aerial, drone, and sensor imagery into structured world models and simulation-ready 3D environments for government and enterprise teams working with large-scale physical-world data. For geospatial analysts, disaster response planners, and simulation teams, it can speed change detection, situational awareness, and AI training by converting massive imagery streams into operational intelligence.

Homepage | Kubit

Warehouse-native analytics that query Snowflake, Databricks, BigQuery, and ClickHouse directly. Real-time, governed insights with explainable AI.

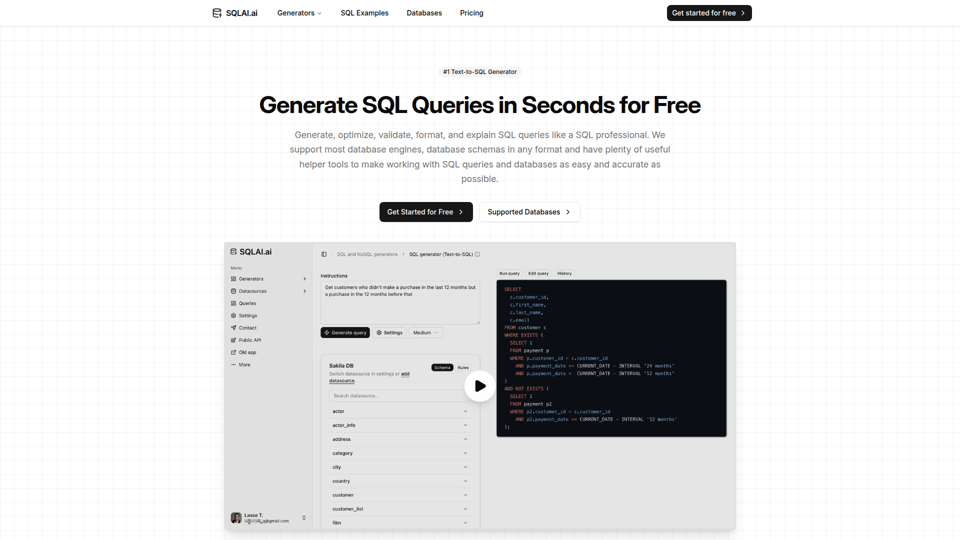

Generate SQL Queries in Seconds for Free - SQLAI.ai

SQLAI.ai is an AI SQL assistant that helps analysts, data engineers, developers, and data teams generate, optimize, validate, format, explain, and run SQL or NoSQL queries from natural language across many database engines. For analytics and engineering work, it can shorten query drafting and review cycles by combining schema-aware generation with validation and readable explanations.

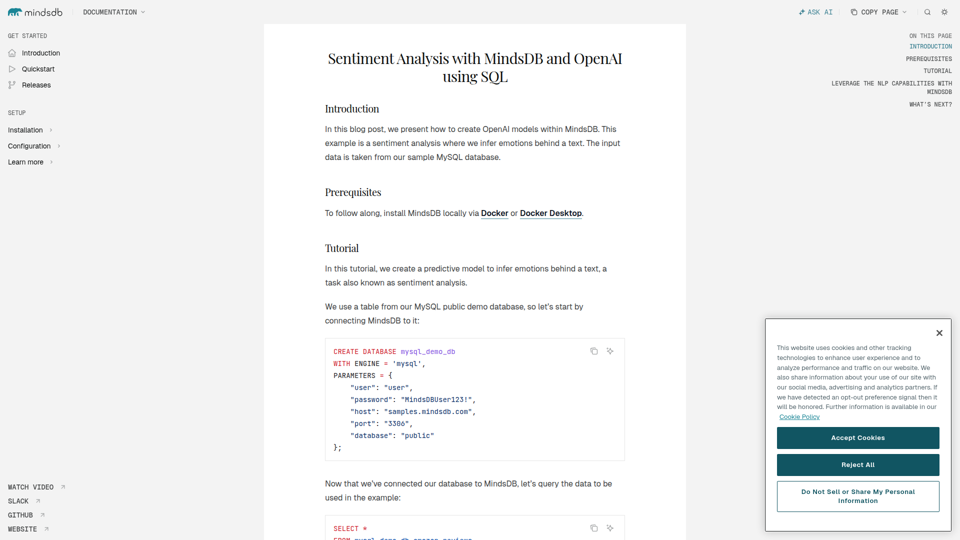

Sentiment Analysis with MindsDB and OpenAI using SQL - MindsDB

This MindsDB tutorial shows developers how to use SQL to create an OpenAI-powered sentiment analysis model inside a database and classify text reviews as positive, neutral, or negative. For data engineers and application developers, this approach can speed up adding AI text analysis to database workflows without building a separate machine learning pipeline.

OSSUS

OSSUS is a self-healing data infrastructure platform that helps organizations turn fragmented records into trusted, agent-ready systems of truth, mainly for teams responsible for data and AI foundations. As AI adoption grows, it can help data, analytics, and engineering professionals improve reliability by giving AI systems cleaner, more dependable information to work from.